|

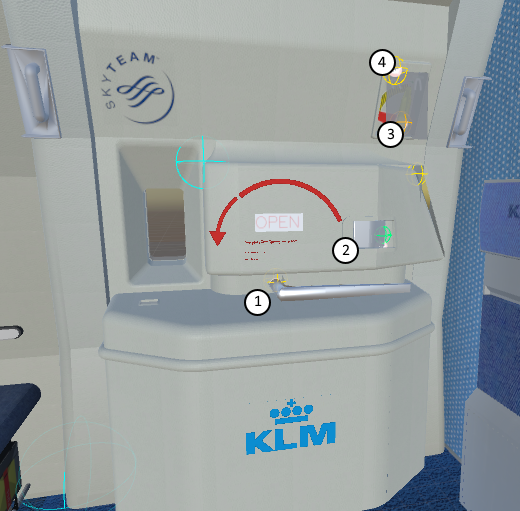

The door of the Boeing 777 has four interactable objects, and each of them has to trigger something else when it is turned beyond a certain point. The main handle opens and closes the door. It turns through 180 degrees from right to left. Halfway through, the whole door should be lifted up from its hinge, and once the handle is pulled all the way over, the door should open. That makes four events for this handle alone. From right to left: lift hinge, open door. From left to right: drop hinge, lock door.

The airlock handle locks the door in place once it is opened, so a gust of wind can't accidentally push the door back to its closed position. This handle has only one event, which can be fired only when the door is completely open. Once pulled, it should close the door, after which the main handle takes over.

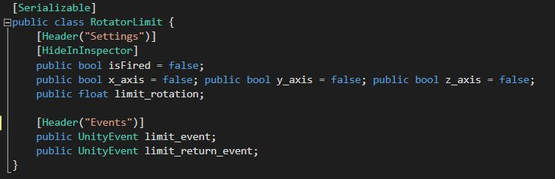

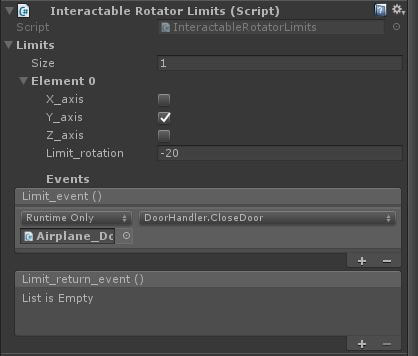

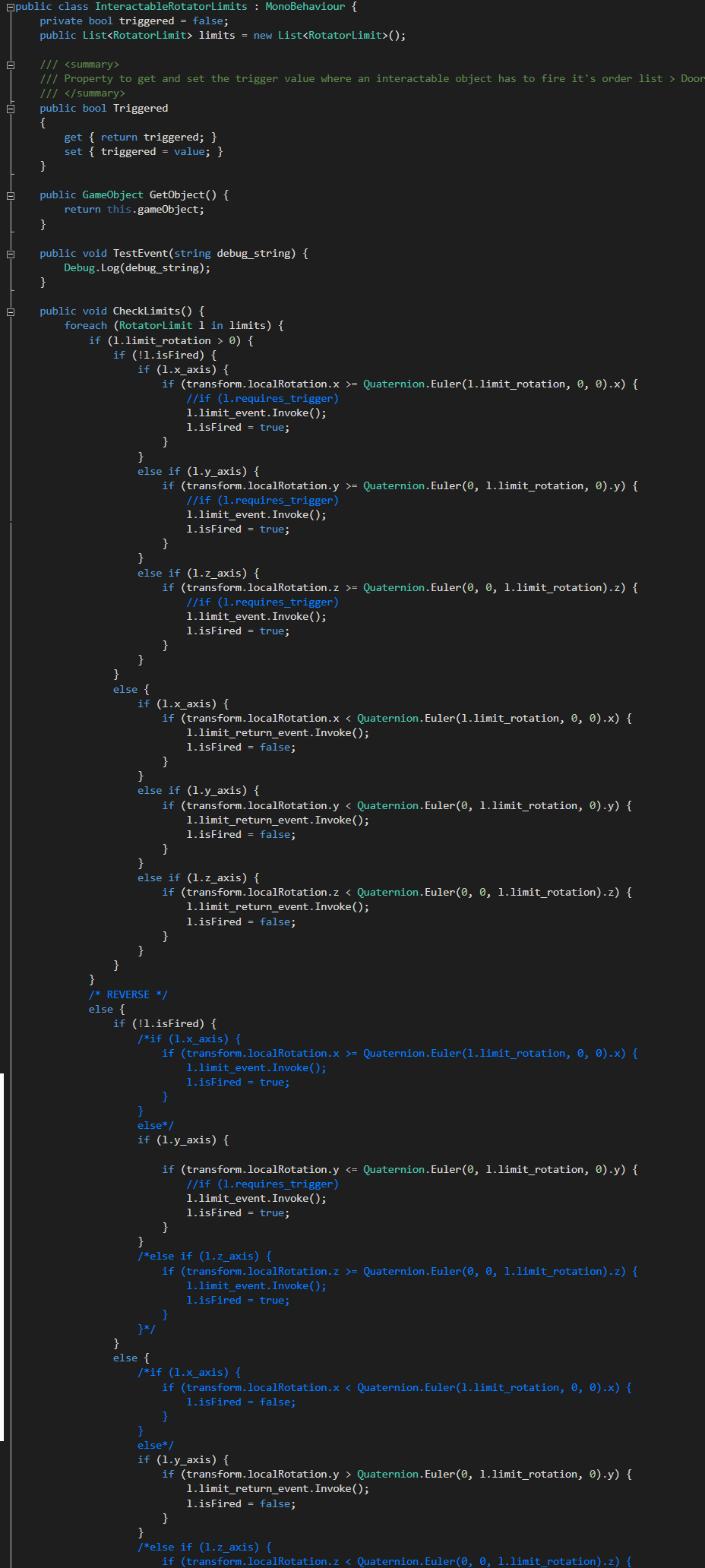

Instead of writing a separate class for each of the interactable objects, I wrote a single class that can take any number of events at certain points of the rotation. For example, once the airlock handle passes 20 degrees around the y-axis, it has to fire the function that closes the door. The main handle has to fire an event once it passes 80 degrees around the x-axis and another event once it passes 170 degrees. On top of that, it has to fire two more events once it passes 170 and 80 degrees on the way back. For that purpose the class also has a field for events on the return.

The container for the event, the return event, the number of degrees and the axis is a class of its own. The main handle would have two of these containers, one for each of the two degree checks it passes through. This container doesn't have any logic for firing the events or deciding when they should fire. Therefore a handler comes into play, which is the class that a handle receives. This class keeps track of the current rotation of the object and checks whether it passes one of the limits set in the container class.

It is not the most efficient or clean code, given that the check has to run all the time for each event, and again for each interactable object. But it works. At the time I was not yet completely sure how delegates work and how you can subscribe to certain events, which would have been an excellent, more efficient way of handling the objects.
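
To make the container and handler idea concrete, here is a minimal sketch of the polling approach. It is written in Python purely for illustration and is not the original Unity script. The names `RotatorLimit`, `InteractableRotatorLimits` and the check function come from the text; every field name, the callback signature and the mapping of events to thresholds (inferred from the order in which the four events are listed) are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Callback = Callable[[], None]


@dataclass
class RotatorLimit:
    """Container: one threshold on one axis with a forward and a return event."""
    axis: str                              # "x", "y" or "z" (assumed representation)
    degrees: float                         # threshold angle in degrees
    on_pass: Optional[Callback] = None     # fired when the angle rises past the threshold
    on_return: Optional[Callback] = None   # fired when the angle drops back below it
    passed: bool = False                   # remembers which side of the threshold we are on


class InteractableRotatorLimits:
    """Handler: polls the current rotation and fires events on threshold crossings."""

    def __init__(self, limits: List[RotatorLimit]) -> None:
        self.limits = list(limits)

    def check_limits(self, rotation: Dict[str, float]) -> None:
        # Meant to be called every frame, which is the inefficiency noted above.
        for limit in self.limits:
            angle = rotation[limit.axis]
            if not limit.passed and angle >= limit.degrees:
                limit.passed = True
                if limit.on_pass:
                    limit.on_pass()
            elif limit.passed and angle < limit.degrees:
                limit.passed = False
                if limit.on_return:
                    limit.on_return()


# Usage: the main handle with its two degree checks on the x-axis.
log: List[str] = []
main_handle = InteractableRotatorLimits([
    RotatorLimit("x", 80, lambda: log.append("lift hinge"), lambda: log.append("lock door")),
    RotatorLimit("x", 170, lambda: log.append("open door"), lambda: log.append("drop hinge")),
])

# Simulate a few frames: turn the handle all the way over and back again.
for angle in (0, 90, 175, 100, 10):
    main_handle.check_limits({"x": angle, "y": 0.0, "z": 0.0})
```

The `passed` flag is what keeps an event from firing again on every frame while the handle stays beyond a threshold.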

The door handler has a list of InteractableRotatorLimits, which represent the four objects on the door, and it calls the CheckLimits function from Update for all of these objects. With the knowledge of delegates I would have taken a different approach and made the RotatorLimit class expose delegates that the door handler could subscribe to, for events like passing a certain number of degrees. The functions for the events can then be implemented in the door handler. On that note, all of the interactable objects have a HingeJoint component, which already has data for the limits and the current rotation relative to these limits. Instead of writing the whole CheckLimits function myself, I would take these from the HingeJoint component.

At the start of the project I was under the impression that we would receive an SDK with whatever hardware we would use and that most of the functionality would have to be programmed ourselves. Luckily, it turns out that an individual has taken it upon himself to develop a generic toolkit that integrates all the available virtual reality hardware into Unity: VRTK (Virtual Reality Toolkit). This makes developing for virtual reality a matter of adding components to objects instead of attaching whole, self-written scripts.
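
Returning to the door handler: the subscription approach described above could be sketched roughly as follows. This is again Python for illustration only, not Unity code. The `subscribe` and `set_angle` methods, the event names and the `DoorHandler` fields are all hypothetical; in Unity the angle would presumably be read from the HingeJoint component rather than passed in by hand.

```python
from typing import Callable, Dict, List

Listener = Callable[[float], None]


class RotatorLimit:
    """Publishes an event when the angle crosses a threshold (hypothetical API)."""

    def __init__(self, degrees: float) -> None:
        self.degrees = degrees
        self._passed = False
        self._listeners: Dict[str, List[Listener]] = {"passed": [], "returned": []}

    def subscribe(self, event: str, listener: Listener) -> None:
        # event is "passed" or "returned"; anything else raises KeyError.
        self._listeners[event].append(listener)

    def set_angle(self, angle: float) -> None:
        now_passed = angle >= self.degrees
        if now_passed == self._passed:
            return  # no crossing, so no listener is called
        self._passed = now_passed
        for listener in self._listeners["passed" if now_passed else "returned"]:
            listener(angle)


class DoorHandler:
    """Implements the reactions and subscribes them to the limits it cares about."""

    def __init__(self, airlock_limit: RotatorLimit) -> None:
        self.door_open = True  # assume the door starts fully opened
        airlock_limit.subscribe("passed", self.close_door)

    def close_door(self, angle: float) -> None:
        self.door_open = False


# Usage: the airlock handle closes the door once it passes 20 degrees.
airlock = RotatorLimit(degrees=20)
door = DoorHandler(airlock)
airlock.set_angle(5)   # below the threshold, nothing happens
airlock.set_angle(25)  # crosses 20 degrees, close_door runs once
```

Compared with the polling version, the door handler no longer loops over every limit itself; each limit only calls its subscribers at the moment a threshold is actually crossed.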

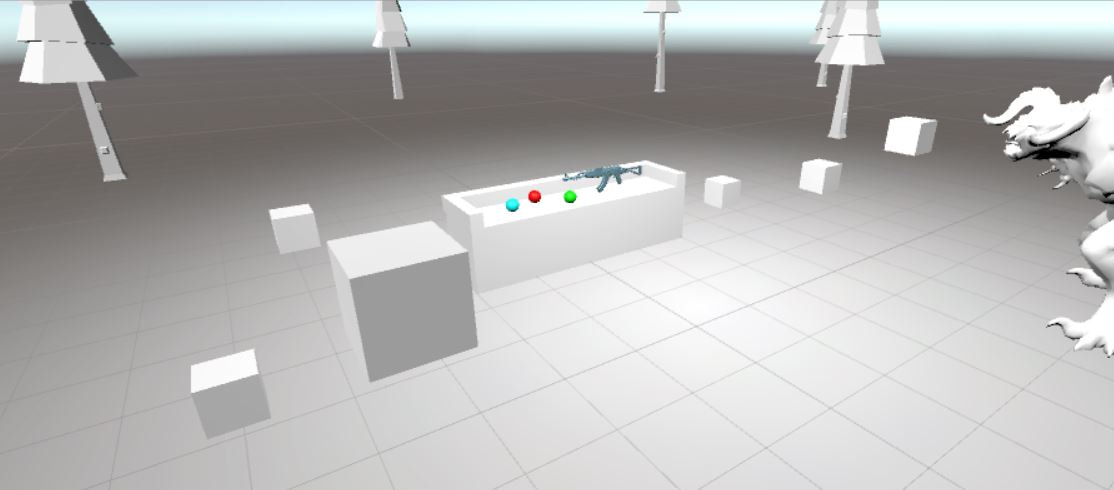

For our project we chose to make use of the HTC Vive. After following some of the available tutorials on using VRTK from the author himself (link to YouTube channel), I quickly had a scene set up with a few interactable objects that could be tested in virtual reality. To familiarize myself with the available functions and how most of the underlying scripts work, I set out to make a simple interaction with a few coloured balls and colourless cubes. When the player picks up a ball and touches a white cube, the cube takes over the colour of the ball. Simple enough and quickly made!

I wouldn't be myself if I didn't set out for a bit more of a challenge, however, so I started delving into the scripts behind VRTK to see if I could extend them. After taking a look at different projects and reading through the VRTK documentation, I managed to write a script that extends existing VRTK scripts so that, when the player is holding an object and touches something with it, the controller gives feedback by vibrating. Even though the objects aren't physically there, this simple form of feedback gave the sensation that you were in fact touching something. At that moment it baffled me how easily you can trick the brain in virtual reality, something that would come in useful in later stages of this project.

Armed with a lot of new knowledge, I was excited to work on more challenging feats and set my eyes on a working automatic weapon, including all the small working parts like removing the magazine, pulling the cocking mechanism and firing. For this I not only had to read up a lot on using VRTK, but I also learned a lot of already existing Unity functions, all of which can be put to good use both during and after this semester. With a good set of knowledge about developing virtual reality applications in Unity, I set this personal project aside and started working on the KLM assignment: making an operable aircraft door in virtual reality.

On September 26th the team visited the KLM training and simulation department. This is where all the simulators are situated and where the cabin crew is trained on how to respond to the different situations (called normal and abnormal situations) that can occur in and around an airplane. The main reason for our visit was to attend a door training. Twice every year the cabin crew has to come to the location to show that they are still able to open the airplane doors according to regulations. For every type of aircraft KLM owns, a small portion of the plane around the door has been recreated. The trainer operates a computer that controls the way the door behaves and the different types of (ab)normal situations that occur. The trainer can, for instance, set the outside conditions to misty or start a fire inside the airplane, or trigger technical malfunctions like the door handle not responding, the power assist not functioning or the slide not inflating after opening. These are all situations the cabin crew has to train for, because each of them comes with a different procedure. During these trainings we had the opportunity to watch the cabin crew operate the doors in different stressful situations, and we learned that opening an airplane door is not just a matter of turning the handle and pushing the door open.

We learned that there are multiple handles that control the way the escape slide inflates and how the door is released once it's locked. We also learned that the door has a power assist option, with which the operator does not have to put as much force on the door as without it. This ignited a discussion within the team. How are we going to replicate this on our physical door? And how are we going to animate our virtual counterparts? The door consists of a lot of different moving parts. At the end we sat together with the product owners to have a small discussion about the whole day and what they expect to see in the end product. We came to the conclusion that we should either go for a fully immersive experience where the focus lies on the stressful situations and how to respond to them, or focus on creating a physical door that works together with the VR world so that the users build up a form of muscle memory for operating the doors. We walked away with a notebook full of notes and useful knowledge. |