
One of the criteria to pass the minor is that each student gives the class a lecture about a subject they've been busy with for the past months. Optimizing our application and increasing its overall performance had kept me occupied for a while, because our own project had some performance issues as well. Working on this topic has taught me a lot of little details about the Unity engine's workings, so I decided to share this knowledge with the class and give a masterclass about performance and optimization in Unity. The subjects I went into detail about were rendering, object batching, the profiler and frame debugger, culling and light baking. I closed with some general tips and tricks to improve your application's performance.

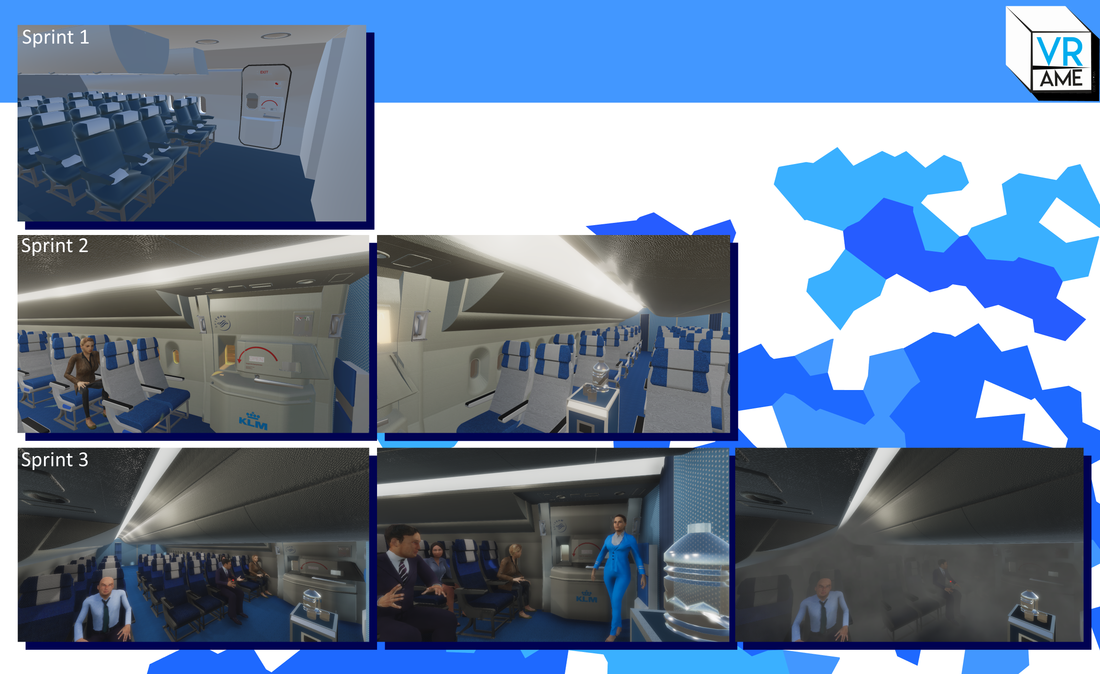

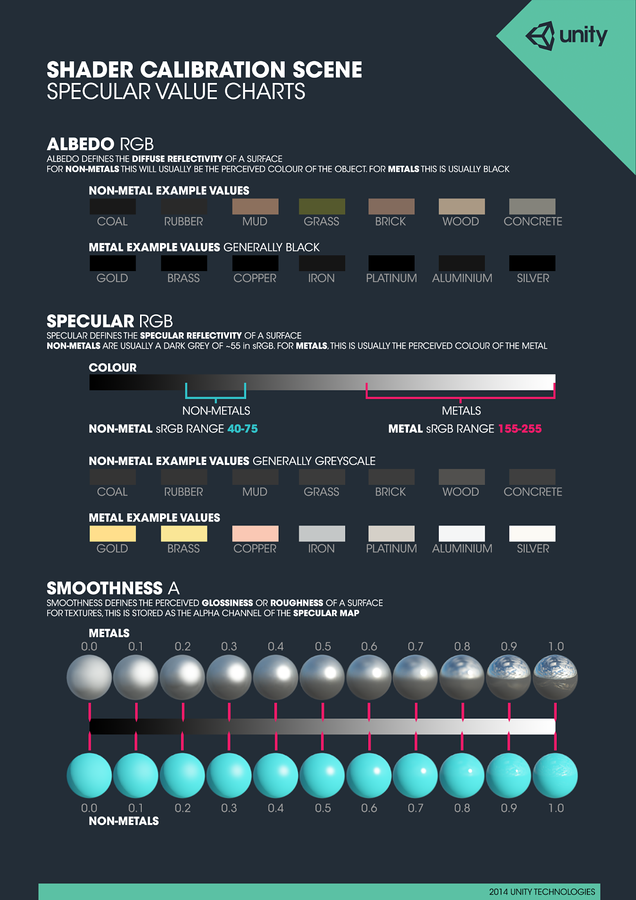

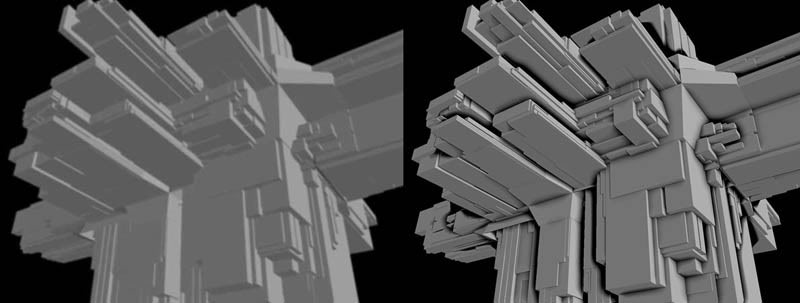

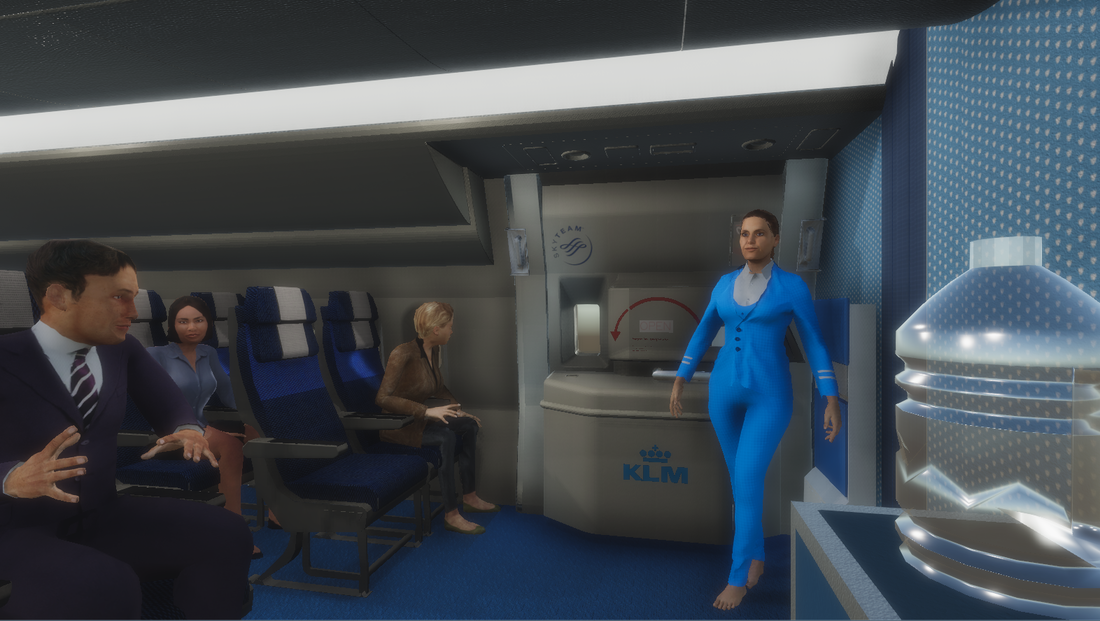

The full presentation can be found here: Performance and Optimization in Unity3D. Reception was good and more students showed up than I had anticipated. I generally enjoy teaching others about something I'm interested in, but I tend to dwell on irrelevant subjects when I should continue with the topic instead. This was a good moment to reflect on my goal of working on my communication skills and on the feedback I received during the earlier peer assessment about talking slower. After the class I asked a couple of students what they thought about my way of talking and explaining things. Most answered that although I talk a bit fast, I was clear and understandable and explained everything thoroughly. The presentation apparently had to be filmed so that it can be assessed at the end of the minor. However, this was stated only after I had given my masterclass.

Over the course of the minor I've been continuously busy with improving the graphical fidelity of the simulation while still keeping a steady performance for VR. Sprint 1 was mainly a prototype, set up to teach Unity to the other teammates and to give the product owners an idea of what we want to create. During sprint 1 and sprint 2 I worked on creating a more realistic atmosphere, one you would encounter inside an airplane. The feeling I described the airplane with was "clean and matte": a smooth, seemingly plastic surface that up close is actually more rigid. The result of sprint 2 captures this feeling rather well, but something was still off. At that point we got somebody from outside the school with extensive experience in Unity and rendering, Nico, to look at our project. In his spare time he's been busy with rendering realistic scenes in the Unity engine using the Physically Based Rendering (PBR) concept. PBR is a way of managing materials and lighting so that they mimic those of the real world.
The idea is that you create a room with lighting settings that come close to how lighting behaves in real life. Inside this room there are a few objects with materials assigned to them (plastic, wood, metal) that use the proper values; each material has a certain value on the specular map. You keep tweaking this room until it's almost indistinguishable from the real world. This is called your reference room. Whenever you create a new model and texture, you place it inside the room. If it looks like it belongs in the room, you know you've done well. However, if something seems off, you know it can't be the material or the lighting, so you'll have to make changes to either your model or the texture.

Nico also explained the difference between the forward and deferred rendering paths and how they impact the visuals of your game, as well as rendering in linear versus gamma color space. Both give better results when properly used, especially for the lighting.

We've also explored post-processing effects and how you can add these to your Unity project. They used to be a standard asset, available from the editor itself, but have since moved to the Asset Store (link). The Post Processing Stack adds effects like anti-aliasing, bloom, ambient occlusion, color grading and color correction. In our case ambient occlusion (AO) gave the whole scene that extra touch of realism by adding darker shades where light can't reach. AO is calculated from the depth buffer and applies a pseudo shading to those areas.

To further improve performance and the overall look we also asked the Game Assets class expert, Freark, for some tips. He mentioned that baking your lighting has a great positive effect on performance and, depending on the lightmap, can even look better than dynamic lighting. However, once we started baking our lighting, black spots appeared all over the airplane's interior.
For a while we just assumed it wasn't possible with the other settings we had, but after some research we discovered that this happens when the UV map of a model is not properly unwrapped. Faces on the UV map would overlap which, of course, results in an overlapping lightmap as well, explaining the black spots. We decided to leave light baking for a later stage, because unwrapping the UVs for the airplane turned out to be a lot more trouble than anticipated: the airplane consists of a lot of individual models that have incredibly tiny faces.
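
To make the linear versus gamma point from Nico's explanation a bit more concrete, here is a minimal sketch in plain Python (outside of Unity). It assumes the gamma-encoded values follow the standard sRGB transfer function; the function names are my own and not part of any Unity API. It shows why summing two light contributions directly on gamma-encoded values gives a different (blown-out) result compared to summing them in linear space.

```python
def srgb_to_linear(c: float) -> float:
    """Decode one sRGB-encoded channel value in [0, 1] to linear light."""
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(l: float) -> float:
    """Encode one linear-light channel value in [0, 1] back to sRGB."""
    if l <= 0.0031308:
        return l * 12.92
    return 1.055 * l ** (1 / 2.4) - 0.055


def add_lights_gamma(a: float, b: float) -> float:
    """Sum two light contributions directly on the encoded values (gamma space)."""
    return min(1.0, a + b)


def add_lights_linear(a: float, b: float) -> float:
    """Decode, sum in linear light, clamp, then encode again (linear space)."""
    total = srgb_to_linear(a) + srgb_to_linear(b)
    return linear_to_srgb(min(1.0, total))


if __name__ == "__main__":
    # Two lights that each light a surface to an encoded value of 0.5.
    print("gamma space :", add_lights_gamma(0.5, 0.5))
    print("linear space:", add_lights_linear(0.5, 0.5))
```

In gamma space the two lights already clip to full white, while in linear space the result stays well below that, which matches the observation that lighting in particular benefits from the right color space.
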

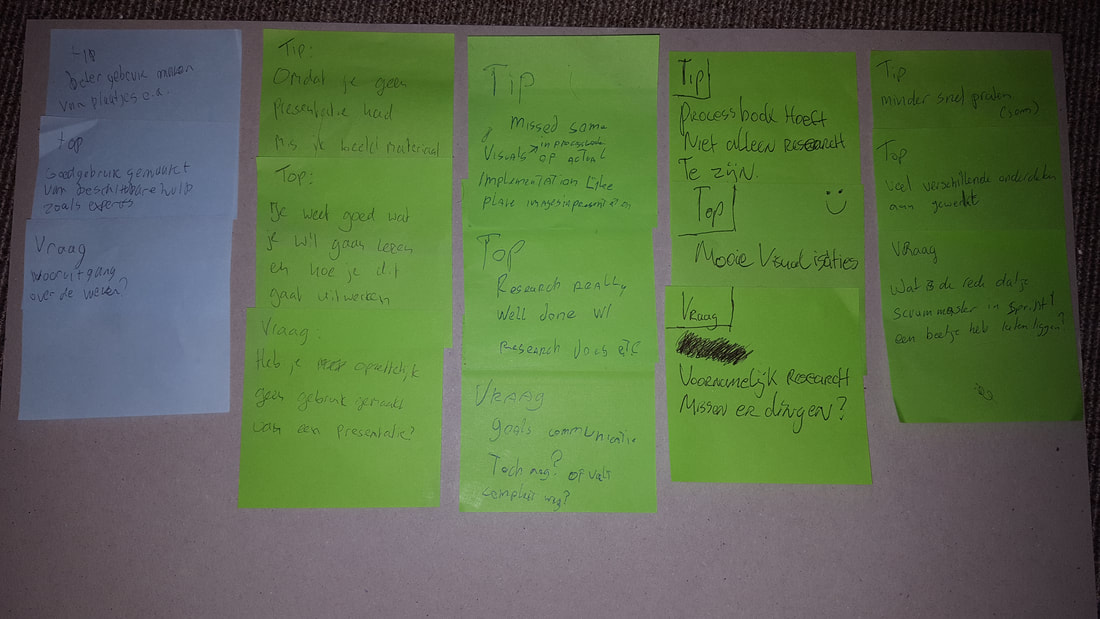

Overall we arrived at a pretty realistic looking scene which runs at a steady 50 - 60 fps (if nothing is happening) in the VR headset.

The students had to prepare a small presentation about their work and process up to this point. Two other students received the process book of the presenting student and had to rate it according to an assessment rubric provided by the faculty. After the presentation the student received feedback on both the presentation and the current process book. This way the student gets an idea of how the final assessment will be conducted and has time to revise the current process book. I didn't prepare any visuals for my presentation. Instead I showed this blog and talked a bit about the process behind some of the topics. At the start of the semester I set the goal of being more communicative with my team, and through being the Scrum master and mediator I reckon I was able to develop my communication skills. Other goals were more generic, like developing for VR and learning more Unity functionality. These two are an ongoing process. Instead I talked about how I've been busy with increasing performance for VR applications in Unity and how to simulate sound in VR, keeping in mind the laws of air and material resistance. The feedback consisted mostly of remarks that I should have prepared visuals for the presentation and that I should try talking at a slower pace. Another point worth mentioning was that it is good that I do so much research into my topics, but that my process book should also mention the smaller details and works.

Today we scheduled a visit to the KLM training offices with our product owners, who would make sure a couple of stewards would be present. For this day we prepared the demo to include a voice-over that gives simple instructions to the trainee, to slowly introduce them to virtual reality before going on to the door procedures. The trainee is tasked with opening the door, closing the door, arming the slide and opening the door again with the slide armed, without any prior instructions on how the door works (as they should already know these procedures from the real-world training). As a second test we asked the steward to remain seated while the airplane cabin fills with smoke (this event was not communicated to them beforehand, to keep the element of surprise). We had planned to equip the trainees with a heartbeat sensor to get an idea of how much more stressful the smoke scenario was compared to the starting scenario where nothing happens. A user test document can be found here: User Test (full). After the test we asked the testers to fill in a questionnaire (which can be found in the user test document). Feedback was promising and we were notified of some details we had missed.

The general reception was that it looked incredibly good and users felt engaged and immersed due to proper use of audio cues. The questionnaire results can be found here. We took all feedback into consideration and changed our next sprint planning accordingly to accommodate the missing features.

One of the scenarios that could happen inside the airplane is the cabin filling with smoke due to a fire inside or outside. This changes how the stewards should operate the door and work with the passengers. Not to mention that this adds a lot of stress to the situation as a whole, which could impact how the steward handles it and reacts. For these reasons we decided that a "smoke situation" would be one of the first scenarios to work out.

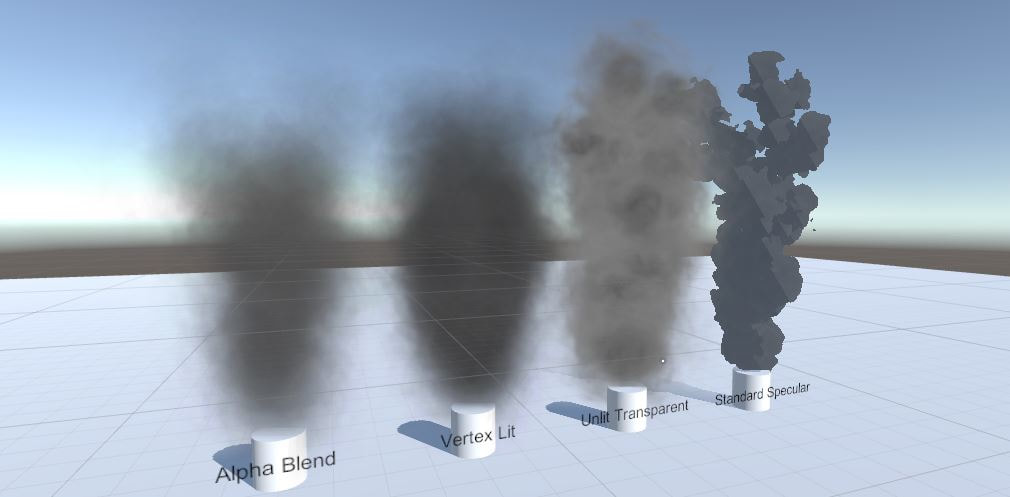

Making particles in Unity is fairly easy. Making performance-efficient particles that look good is a lot more difficult, especially when creating for VR applications where the rendering path is different. It all depends on which shader is used by the material assigned to the particles. To test the different results in looks and performance I created four different particle systems to compare.

Alpha Blend: After rendering the screen, the alpha values of the texels are evaluated to see what color is behind the texture. The two values are blended together to give the illusion of transparency. Although it gives good-looking results, alpha blending is relatively heavy on performance.

Vertex Lit: This shader only calculates light at the vertices of the mesh, so no per-pixel lighting takes place. As a result the center of your image will appear a lot darker than the corners, depending on the size of the image and the distance to the light source. That said, the vertex lit shader is incredibly cheap and produces decent results. As an added plus it changes color depending on the direction of the light sources. However, when in complete shadow it appears completely black.

Unlit Transparent: This is a combination of the previous two, where transparency is calculated over the texels with lower alpha values. Lighting, however, is not calculated, and the result is a smoke plume that looks exactly like the provided texture. This means that no matter the direction of the light source, the smoke will always appear the same, as if it's receiving an equal amount of light from all sides.

Standard Specular: A shader that is generally used for Physically Based Rendering and metallic surfaces due to the way it handles lighting calculations and applies gloss. With the cutout setting it cuts away a percentage of the transparent edges of the smoke texture, giving more rigid outlines.
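
The difference between blending and cutout described above can be sketched per pixel in a few lines of plain Python. This is only an illustration of the general idea, not Unity's shader code: the function names and the 0.5 cutoff are assumptions of mine. Blending mixes the smoke color with whatever is behind it, while cutout makes a hard yes/no decision per pixel.

```python
from typing import Tuple

Color = Tuple[float, float, float]


def alpha_blend(src: Color, dst: Color, alpha: float) -> Color:
    """Mix the particle color (src) with the color already on screen (dst)."""
    return tuple(s * alpha + d * (1.0 - alpha) for s, d in zip(src, dst))


def alpha_cutout(src: Color, dst: Color, alpha: float, cutoff: float = 0.5) -> Color:
    """Keep the particle pixel only when its alpha reaches the cutoff (hypothetical 0.5)."""
    return src if alpha >= cutoff else dst


if __name__ == "__main__":
    smoke = (1.0, 1.0, 1.0)   # white smoke texel
    cabin = (0.0, 0.0, 0.0)   # dark pixel behind it
    print(alpha_blend(smoke, cabin, 0.25))    # soft, partly see-through
    print(alpha_cutout(smoke, cabin, 0.25))   # below the cutoff: background only
    print(alpha_cutout(smoke, cabin, 0.75))   # above the cutoff: solid smoke
```

The blended version has to read and mix the background for every overlapping layer of smoke, which is where the extra cost comes from; the cutout version skips that mixing but loses the soft edges.
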
Though efficient and having a nice cartoon look, the cutout variant doesn't convey a proper smoke feel. The reasoning behind trying it was that it reduces the draw calls needed for the transparent layers, which became an issue when the smoke was placed inside the cabin. However, the thick layer of smoke completely hid everything in sight the moment the smoke came in. Given that we're currently pushing to make the simulation look real, we chose to use the alpha blend shader for the smoke. The impact in the airplane scene is pretty high, but a lot of overhead comes from rendering the plane itself because most of the meshes aren't properly grouped and optimized. Redoing most of the models is on the to-do list. After that, depending on the resulting runtime performance, we can change the smoke shader.

One of the goals for the project was to implement a form of mixed reality. Mixed reality is the idea of combining the real world with the virtual world. Walking around in VR space where the walls are in the same location as in the real world is a form of mixed reality. In our case we wanted to translate the airplane door to the virtual space so that the trainees would actually have to hold on to a door handle, reinforcing the idea of opening the door and developing muscle memory, since it is a training simulation. Research started with figuring out how the HTC Vive controllers work and how they are tracked within Unity projects with the use of the VRTK toolkit. The Vive website has an extensive article on the inner workings of the controllers (link), outlining the guidelines for developers. The SteamVR plugin handles all of the tracking and correctly shows the controllers in Unity. Therefore, getting the position and orientation of the controllers is as easy as retrieving the transform component from the controller objects. No extra work. I explored a few options for tracking other objects in virtual space.
This could be done with the Vive controllers themselves or with third-party tracking sensors. However, the latter would require different products and another toolkit next to the already in-use SteamVR and VRTK toolkits, which is unnecessary overhead. With the available resources I went with the first approach of using the Vive controllers. The Vive has two options when it comes to tracking: the Vive controllers and the Vive trackers (as pictured above). The Vive controllers are mainly used for interaction by the player in the virtual space. The trackers, however, have the benefit that they can be mounted on any object, making that object trackable in VR. At this point in time the trackers are sadly not available to consumers yet, so we had to make do with two Vive controllers: one for the player and one to track an object. To create the illusion that an object in reality is also in VR, the position, shape and scale all have to be the same for it to feel real. For the example I used a bottle of turpentine that had a fairly generic shape and recreated that object in VR, trying to get the same dimensions. One problem arose: the player needs their hands free to pick up the bottle, and the only free hand is the one not being tracked. With the headset on, the player would not know where his or her hand is. So a new question took priority: how can we track and show the position of the player's hands in VR while keeping the hands free? During research into the Manus VR gloves, a picture of an early development build of the gloves came up where Vive controllers were attached to the user's forearms. This gave us a direction to experiment with. Over the weekend I created a simple controller mount from scraps of foam and velcro and developed a scene in Unity that has a table, with the same dimensions as the table in the VR room, and a bottle, to which the other controller is mounted. Now the position of the user's hand is approximately tracked while the hands stay free.
And the object is being tracked. That is all there is to it: given that the tracking of the controllers happens already, the objects are by themselves already in the same position as in reality. The picture above is the resulting product. The user no longer has the ability to use any of the buttons on the controller but has full control of their hands. Given that the human brain is excellent at replacing your arms with some other appendage, simply having a sphere in the headset as your arm is enough.
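
As a rough illustration of how a sphere could follow a hand when the controller sits on the forearm, the sketch below places a point at a fixed offset from a tracked controller. It is plain Python rather than Unity code, and the offset itself is an assumption of mine: the text above only says the hand is tracked approximately. Rotations are unit quaternions in (w, x, y, z) order.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (w, x, y, z), assumed unit length


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def rotate(q: Quat, v: Vec3) -> Vec3:
    """Rotate vector v by unit quaternion q."""
    w, x, y, z = q
    u = (x, y, z)
    t = tuple(2.0 * c for c in cross(u, v))
    ut = cross(u, t)
    return tuple(v[i] + w * t[i] + ut[i] for i in range(3))


def mounted_point(controller_pos: Vec3, controller_rot: Quat, local_offset: Vec3) -> Vec3:
    """World position of a point that sits at a fixed offset from the controller."""
    r = rotate(controller_rot, local_offset)
    return tuple(controller_pos[i] + r[i] for i in range(3))


if __name__ == "__main__":
    # Controller on the forearm, turned 90 degrees around the y-axis.
    s = math.sin(math.radians(45))
    rot = (math.cos(math.radians(45)), 0.0, s, 0.0)
    # Hypothetical offset: the hand is 20 cm along the controller's local x-axis.
    print(mounted_point((1.0, 1.0, 1.0), rot, (0.2, 0.0, 0.0)))
```

In Unity the same effect comes for free by making the sphere a child of the controller object, since the transform hierarchy applies this offset automatically.
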

I asked a couple of students to try out the project with the bottle and all of them mentioned that it works better than anticipated. Even though the users don't see a proper hand in VR, once they see that their hand is close to the bottle they instinctively open it to grab the bottle. It felt natural. With this conclusion I started researching interaction with more complex objects for mixed reality purposes. In the case of the KLM project: a door handle.

Having arrived with the whole team, we sat around the table with one of the co-founders and briefly discussed the project we're developing and the benefits of using the Manus VR gloves. After a tour around the office we got hands-on with the gloves themselves. The development team has created a small room with some objects the user can toy around with: some balls and cubes to pick up and throw around, a pole, a sort of DJ table with records that can be spun and a few buttons.

During one of the Virtual Technology classes the teacher mentioned that the human brain is great at substituting limbs that aren't yours and/or aren't connected. Seeing your hands and fingers in VR move the way you move them in reality with the Manus gloves is a great example of this feat. The moment I put on the Vive headset it felt natural. Instinctively you close your fingers to pick up objects, and the same goes for VR. When pressing buttons we generally do so with our index fingers and, once again, the same works in VR. The gloves work as intuitively as our earlier research suggested, and interaction with the world comes naturally without having to explain any controls or mechanical rules to the user. While the other team members got their chance to try out the gloves, I had the chance to talk with one of the developers. The Manus VR team works with Unity3D as well and provides an extensive library that comes with the gloves.

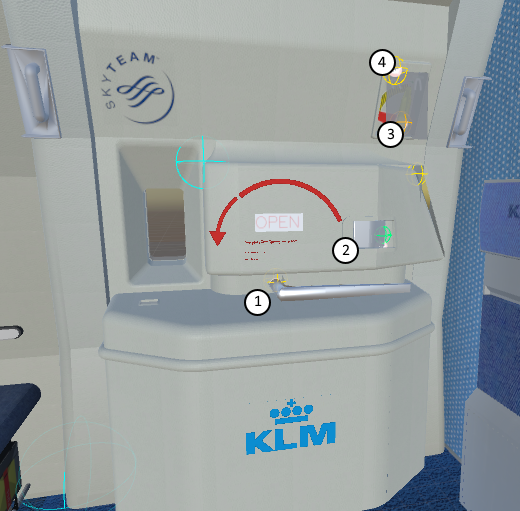

Knowing this and having experienced the gloves ourselves, we were convinced that adding Manus VR to the project is a worthwhile investment, both for KLM and for us. KLM and its employees get a much more accessible simulation experience with a drop-in, drop-out principle where controls don't have to be explained. And we get to work with and learn a new technology, and thereby also discover and research new topics made possible by using the gloves. In the field, will the gloves really give that extra immersion? Will it still feel right without actually having the physical objects present?

The door of the Boeing 777 has four interactable objects, and all of them have to trigger something else when they are turned beyond a certain point. The main handle is the opening and closing handle of the door. It turns over 180 degrees from right to left. Halfway through, the whole door should be lifted up from its hinge, and once the handle is pulled all the way over the door should open. This makes four events for this handle alone. From right to left: lift hinge, open door. From left to right: drop hinge, lock door. The airlock handle is the handle that locks the door in place once it's opened, so a gust of wind can't accidentally push the door back to its closed position. This handle has only one event, which can be fired when the door is completely opened. Once pulled it should close the door, after which the main handle takes over.

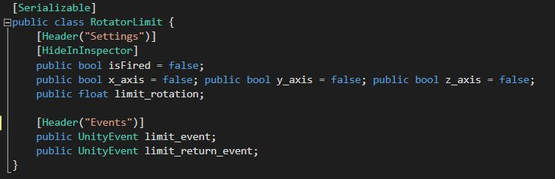

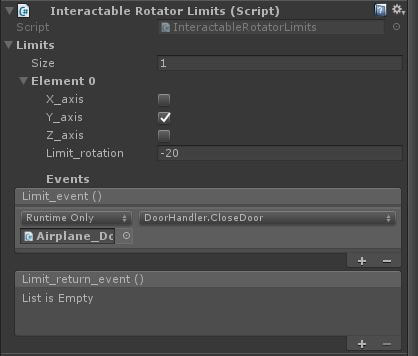

Instead of writing a separate class for each of the interactable objects, I wrote a single class that can take any number of events at certain points of the rotation. For example: the airlock handle has to fire the function to close the door once it passes 20 degrees over the y-axis. The main handle has to fire an event once it passes 80 degrees over the x-axis and another event once it passes 170 degrees. Plus, it has to fire two more events once it passes 170 and 80 degrees on the way back. For that purpose the class should also have a field for events on the return. A container for the event, the return event, and the number of degrees on a given axis beyond which they fire is a class of its own. The main handle would have two of these containers for the two degree checks it passes through. This container doesn't have any logic for firing the events or for deciding when to do so. Therefore a handler comes into play, which is the class that a handle receives. This class keeps track of the current rotation of the object and checks whether it passes one of the limits set in the above class. It's not the most efficient and clean code, given that this has to run all the time for each event and again for each interactable object. But it works. At the time I was not yet completely sure how delegates work and how you can subscribe to certain events, which would have been an excellent, more efficient way of handling the objects.
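
The container and handler described above can be sketched roughly as follows. This is a reconstruction in plain Python rather than the project's actual Unity scripts; the names `RotatorLimit`, `RotatorLimitHandler` and `check_limits` are my own, and which return event belongs to which angle is an assumption based on the order given earlier (drop hinge first, then lock door). Each limit carries callbacks for passing it and for returning past it, so callers subscribe by passing in functions.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class RotatorLimit:
    """One angle threshold plus the events for passing it and for returning."""
    degrees: float
    on_pass: Optional[Callable[[], None]] = None
    on_return: Optional[Callable[[], None]] = None


class RotatorLimitHandler:
    """Tracks one handle's rotation and fires events when a limit is crossed."""

    def __init__(self, limits: List[RotatorLimit], start_angle: float = 0.0):
        self.limits = list(limits)
        self.previous = start_angle

    def check_limits(self, angle: float) -> None:
        """Call once per update with the current angle on the watched axis."""
        rising = angle > self.previous
        # Visit limits in the order the handle physically passes them.
        ordered = sorted(self.limits, key=lambda l: l.degrees, reverse=not rising)
        for limit in ordered:
            if rising and self.previous < limit.degrees <= angle:
                if limit.on_pass:
                    limit.on_pass()
            elif not rising and angle < limit.degrees <= self.previous:
                if limit.on_return:
                    limit.on_return()
        self.previous = angle


def build_main_handle(log: List[str]) -> RotatorLimitHandler:
    """Main door handle: two limits, four events (event mapping is an assumption)."""
    return RotatorLimitHandler([
        RotatorLimit(80.0,
                     on_pass=lambda: log.append("lift hinge"),
                     on_return=lambda: log.append("lock door")),
        RotatorLimit(170.0,
                     on_pass=lambda: log.append("open door"),
                     on_return=lambda: log.append("drop hinge")),
    ])


if __name__ == "__main__":
    events: List[str] = []
    handle = build_main_handle(events)
    for angle in (0, 90, 180, 90, 0):   # simulated per-frame handle angles
        handle.check_limits(angle)
    print(events)
```

Because an event only fires when a threshold lies between the previous and the current angle, holding the handle still past a limit does not fire the event again every frame.
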

The door handler has a list of InteractableRotatorLimits, which represent the four objects on the door, and it calls the CheckLimits function through the Update for all of these objects. With the knowledge of delegates I would have taken a different approach and made the RotatorLimit class a delegate for the door handler, which could then subscribe to events like passing a certain number of degrees. The functions for the events can then be implemented in the door handler. On that note, all of the interactable objects have a HingeJoint component, which already has data for the limits and the current rotation relative to these limits. Instead of having to write the whole CheckLimits function myself, I would take these from the HingeJoint component.

Audio is always an important part of a game, even more so in training simulations. Generally audio is done binaurally, panning between the left and right ears to give a sense of the direction from which the sound is emitted. The subject of the last Virtual Technology class was spatial audio in virtual reality. With virtual reality it becomes that much more important to have a proper audio system to immerse the user in the world. There is a lot to learn when looking at how sounds and the human ears work in real life. One of the biggest aspects is how sounds are perceived differently depending on the direction they come from. So-called HRTFs (Head-Related Transfer Functions) are a sort of filter that changes the way the sound is perceived (a YouTube video showcasing the difference between audio with and without HRTF in Counter-Strike: Global Offensive can be found here). Another thing to take into account is how sound travels through space. Air itself already has an effect on sound waves, but so does every other material. Concrete absorbs and transfers sound differently than a piece of cloth does. There's even a difference between concrete that's connected to the world and concrete that's disconnected.
The students were tasked with finding a way to replicate some of these features in Unity and to see how Unity handles audio. Out of the box, Unity's audio features are rather limited. There's an option to enable 3D sound, which opens up the option to use spatial audio, panning, rolloff, the doppler effect and some other settings. On top of this, hidden in the project settings, the audio source can be set to make use of HRTF filters, provided by Microsoft. Unity audio is relatively complete for simple, average projects but lacks features like reverberating sound off surfaces and materials. For our project we wanted to delve deeper into audio and virtual reality.

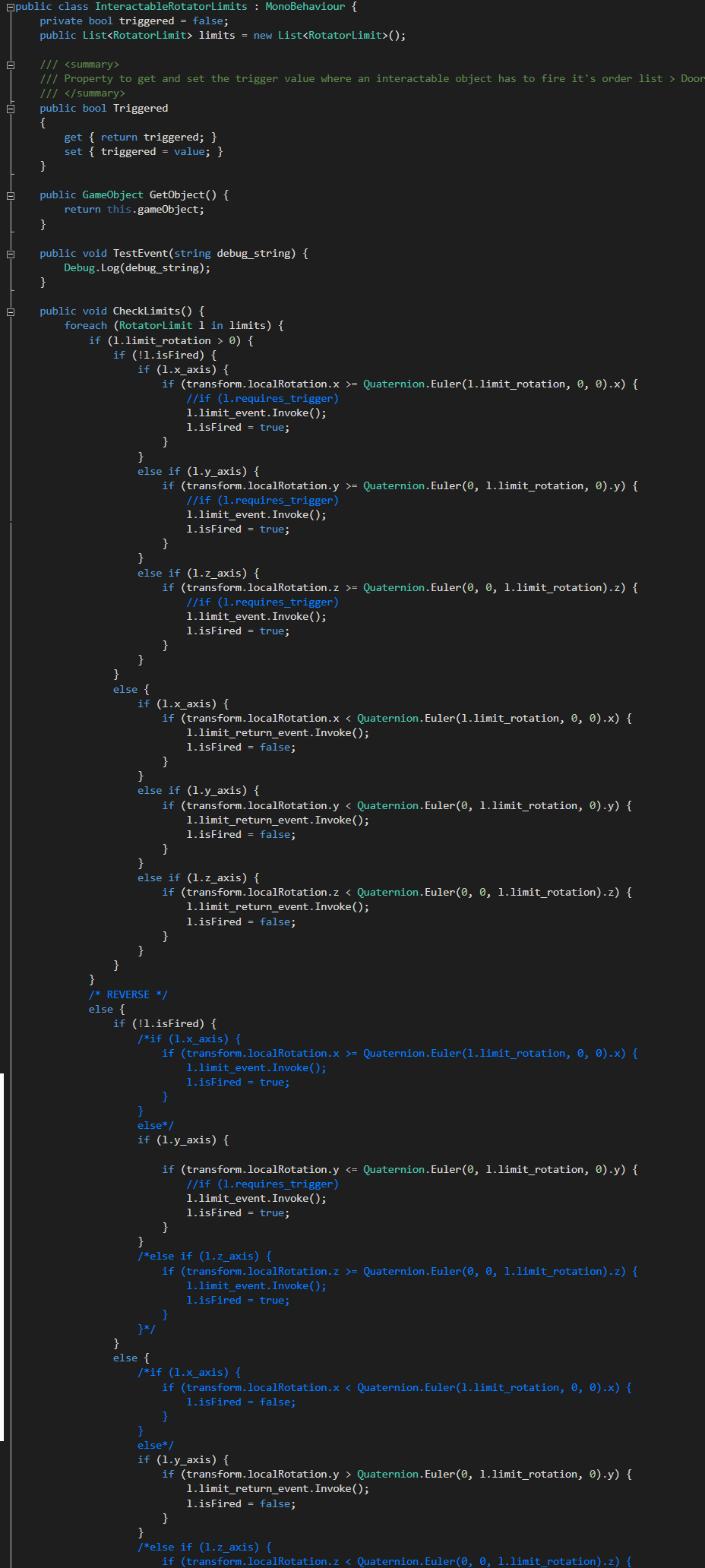

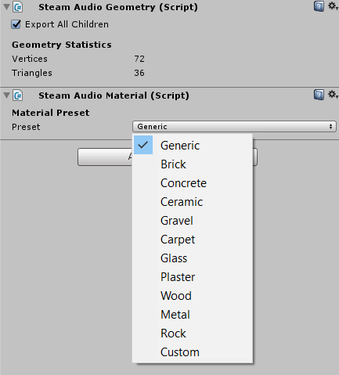

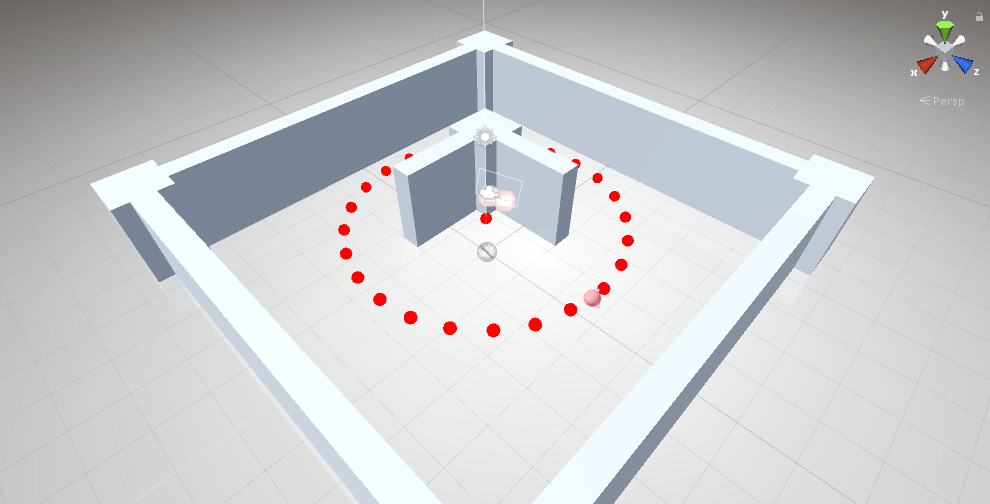

To demonstrate the differences and the pros and cons of Steam Audio and Unity audio, I created a scene with a concrete room and a few walls. Within this room there are two spheres that can be turned on and off. One uses Steam Audio and the other uses Unity audio. The spheres circle around the player, passing behind the concrete walls in their path. In the case of the Unity audio sphere no difference can be heard when the sphere disappears behind the concrete walls in the middle. The only noticeable features are the HRTF filters and the direction the audio comes from. The Steam Audio sphere, on the other hand, loses a lot of its volume and higher pitches once it travels behind the walls. The sound becomes clearer once the sphere reaches the corners of the walls, before it is visible again. During the next Virtual Technology class I let the teacher and some other students try the application and listen to the differences. With both spheres everybody was able to tell where the audio was coming from, and whether it was above or below them. The added value of Steam Audio, however, is the fact that the perceived sound changes based on the position of the source and the objects between the source and the player. Steam Audio offers more features, like placing sound probes in the rooms where sound can in fact travel, to better replicate the HRTF filters. For the KLM training simulation we decided to continue using Steam Audio. An airplane muffles sound in an interesting manner due to its materials and shapes, and Steam Audio has the power to simulate these filters.
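
The effect heard in the demo, a source getting quieter and duller behind a wall, can be imitated with a very simple line-of-sight check. The sketch below is plain Python in 2D and is not how Steam Audio works internally; the gain and low-pass values per wall are hypothetical numbers chosen for illustration. It only shows the principle that the result depends on the objects between the source and the listener.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Wall = Tuple[Point, Point]


def _orient(a: Point, b: Point, c: Point) -> float:
    """Signed area test: which side of line a-b the point c lies on."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (b[1] - a[1]) * (c[0] - a[0])


def segments_cross(p1: Point, p2: Point, q1: Point, q2: Point) -> bool:
    """True when segment p1-p2 properly crosses segment q1-q2."""
    d1 = _orient(q1, q2, p1)
    d2 = _orient(q1, q2, p2)
    d3 = _orient(p1, p2, q1)
    d4 = _orient(p1, p2, q2)
    return d1 * d2 < 0 and d3 * d4 < 0


def occlusion(source: Point, listener: Point, walls: List[Wall],
              wall_gain: float = 0.3, wall_cutoff_scale: float = 0.25):
    """Return (volume gain, low-pass cutoff in Hz) for the direct sound path.

    Every wall between source and listener lowers the volume and removes
    high frequencies. Both factors are made-up illustration values.
    """
    gain, cutoff_hz = 1.0, 20000.0
    for a, b in walls:
        if segments_cross(source, listener, a, b):
            gain *= wall_gain
            cutoff_hz *= wall_cutoff_scale
    return gain, cutoff_hz


if __name__ == "__main__":
    walls = [((-1.0, 1.0), (1.0, 1.0))]   # one wall in front of the listener
    listener = (0.0, 0.0)
    print(occlusion((0.0, 2.0), listener, walls))   # sphere behind the wall
    print(occlusion((3.0, 0.0), listener, walls))   # sphere in plain sight
```

A real solution also accounts for sound bending around corners and reflecting off surfaces, which is why the Steam Audio sphere already became clearer near the corners of the walls.
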

For the Virtual Technology class I was tasked with researching a subject of choice related to virtual or augmented reality. During that time the team had been discussing the idea of having gesture recognition in the training simulation as a means of communicating with other characters: gestures and poses to block passengers from passing, to beckon passengers to move over here or to send them somewhere else. We have discussed this concept with KLM as well, and they mentioned that it would not have any priority for the training but could be an extra functionality to push the realism of the training. Gesture recognition in virtual reality is relatively new and not a lot of information is available as of yet. A few games have implemented a basic version of gesture recognition where the player can wave at a character and the character will wave back. Another example, Left-Hand Path, takes it a step further and implements different motions to cast different spells. This requires recognizing the different motions of the controllers and comparing them to a library of pre-set motions. After having done some research into available tools and examples (the full research can be found at the bottom of this entry in pdf format) I called upon the VR Technology teacher, Juriaan, to discuss gesture recognition. Juriaan mentioned that there is a distinct difference between gestures and poses. A pose is a stationary, single-frame stance, like crossing your arms in front of you. A gesture is a series of poses over time: a motion, like beckoning. Looking for a gesture requires knowing the position and orientation of the controllers and comparing these to the pre-set values of a gesture. A problem with this is the discrepancy in the margins of the motions. Someone could make a beckoning motion while having the controller pointed downwards, while it is meant to trigger an event when it is pointing forwards.
It's a difficult task to clearly distinguish between a gesture and an accident. For the time being I have set this aside, as more pressing matters are at hand. However, we still keep the idea in the back of our heads, should the project reach a finished state and leave us spare time to explore gesture recognition more.

Gesture Recognition in Virtual Reality
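
The distinction Juriaan made between a pose and a gesture can be sketched as follows. This is a minimal illustration in plain Python, not an existing toolkit: the function names and tolerance values are assumptions of mine, positions are assumed to be relative to the headset, and forward vectors are assumed to have unit length. A pose is a single-frame comparison within a margin, and a gesture is a list of key poses that must be matched in order over time.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Sample = Tuple[Vec3, Vec3]  # (controller position, controller forward direction)


def pose_matches(position: Vec3, forward: Vec3,
                 target_position: Vec3, target_forward: Vec3,
                 max_distance: float = 0.15, max_angle_deg: float = 30.0) -> bool:
    """Single-frame check: is the controller close to the target pose?"""
    distance = math.dist(position, target_position)
    dot = sum(a * b for a, b in zip(forward, target_forward))
    dot = max(-1.0, min(1.0, dot))          # guard acos against rounding
    angle = math.degrees(math.acos(dot))
    return distance <= max_distance and angle <= max_angle_deg


def gesture_matches(samples: Sequence[Sample], key_poses: List[Sample],
                    **tolerances: float) -> bool:
    """A gesture is a series of poses: every key pose must be hit in order."""
    index = 0
    for position, forward in samples:
        if index < len(key_poses) and pose_matches(
                position, forward, *key_poses[index], **tolerances):
            index += 1
    return index == len(key_poses)


if __name__ == "__main__":
    # Hypothetical "beckon": hand stretched out pointing forward, then pulled in pointing up.
    beckon = [((0.0, 0.0, 0.5), (0.0, 0.0, 1.0)),
              ((0.0, 0.0, 0.2), (0.0, 1.0, 0.0))]
    forward_start = [((0.0, 0.0, 0.5), (0.0, 0.0, 1.0)),
                     ((0.0, 0.0, 0.4), (0.0, 0.7071, 0.7071)),
                     ((0.0, 0.0, 0.2), (0.0, 1.0, 0.0))]
    downward_start = [((0.0, 0.0, 0.5), (0.0, -1.0, 0.0))] + forward_start[1:]
    print(gesture_matches(forward_start, beckon))
    print(gesture_matches(downward_start, beckon))
```

The second recording shows the margin problem described above: the same motion made with the controller pointing downwards is rejected, and widening the tolerances to accept it makes accidental matches more likely.
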

At the start of the project I was under the impression that we would receive an SDK with whatever hardware we would use and that most of the functionality would have to be programmed ourselves. Luckily, however, it appears that an individual has taken it upon himself to develop a generic toolkit that integrates all the available virtual reality hardware into Unity: the VRTK (Virtual Reality Toolkit). This turns developing for virtual reality into a matter of adding components to objects instead of attaching complete, self-written scripts.

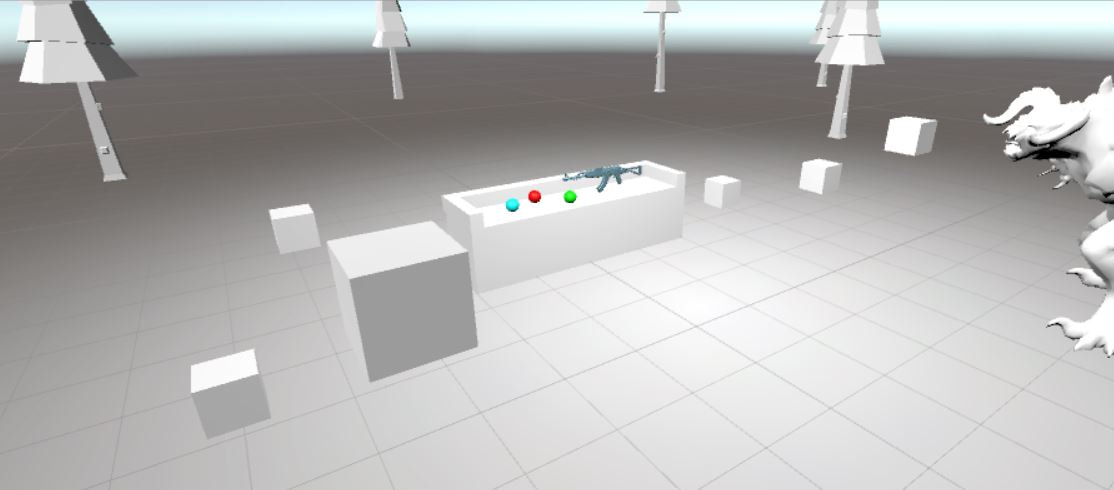

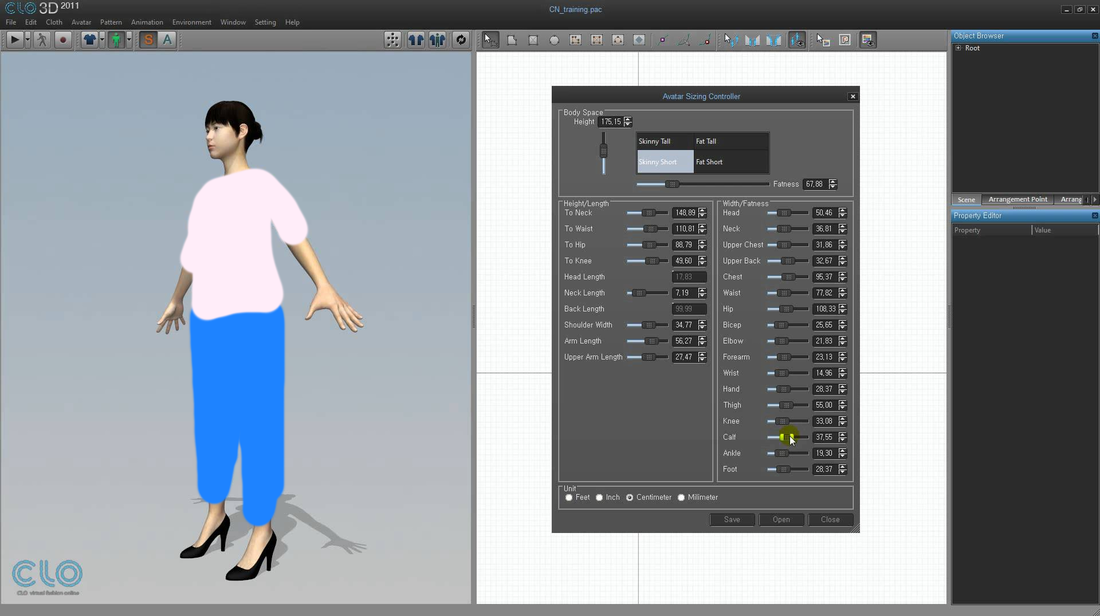

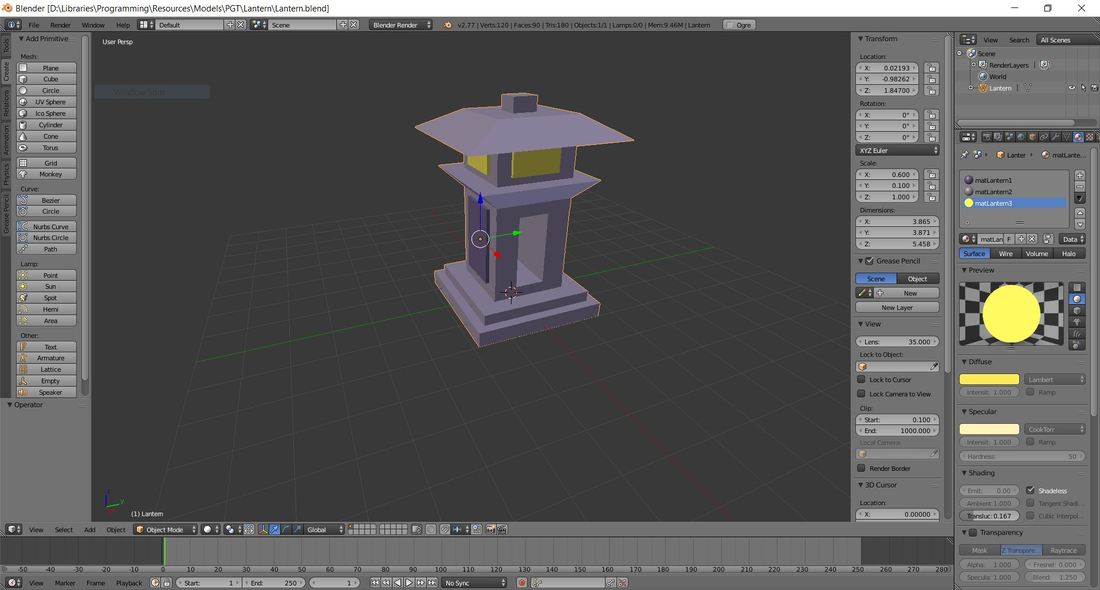

For our project we chose to make use of the HTC Vive. After following some of the available tutorials on using VRTK from the author himself (link to YouTube channel), I quickly had a scene set up with a few interactable objects that could be tested in virtual reality. To familiarize myself with the available functions and how most of the underlying scripts work, I set out to make a simple interaction with a few coloured balls and colourless cubes. When the player picks up a ball and touches a white cube, the cube takes over the colour of the ball. Simple enough and quickly made! I wouldn't be myself if I didn't set out for a bit more of a challenge, however, so I started delving into the scripts behind VRTK to see if I could make extensions to them. Taking a look at different projects and reading over the VRTK documentation, I managed to write a script that extends existing VRTK scripts so that when the player is holding an object and touches something with that object, the controller gives feedback by shaking. Even though the objects aren't physically there, this simple form of feedback gave the sensation that you were in fact touching something. At that moment it baffled me how easily you can trick the brain in virtual reality, something that would come in useful in later stages of this project. Armed with a lot of new knowledge I was excited to work on more challenging feats and set my eyes on a working automatic weapon, including all the small working parts like removing the magazine, pulling the cocking mechanism and firing. For this I not only had to read up a lot on using VRTK, but I also learned a lot of already existing Unity functions, all of which can be put to good use both during and after this semester. With a good set of knowledge about developing virtual reality applications in Unity, I set this personal project aside and started working on the KLM assignment: making an operable aircraft door in virtual reality.

The virtual reality minor offers four additional in-depth classes, two of which the student has to follow. Virtual Fashion goes into the use of the Clo3D tool and how to simulate cloth physics. UX Interaction Design touches on the subject of user interaction in virtual reality and setting up user tests. The two final classes have a more technical approach. Virtual Technology teaches about general virtual reality hardware and Virtual Game Assets is about creating for virtual reality (programming and modelling). For this semester I've enrolled in the Virtual Technology and Interaction Design classes. Virtual Fashion does not have any relevance to our project, nor does it interest me personally. I'd rather learn new things and put effort into different subjects than learn a software tool which I'll most likely never use again after this semester. Virtual Game Assets is in my field of interest. However, the class is about working with Unity3D and the Maya modelling tool, both of which I'm already well experienced with. Therefore I chose not to follow this class. Interaction Design, on the other hand, is relevant for the KLM assignment. The team will have to test a lot of different cases with the KLM target group to ensure the simulation is as close as possible to the real thing. Learning how to set up proper user tests is of great value. Virtual Technology is the class I had the most interest in and was looking forward to. Knowing what the available hardware is able to do or how to tackle certain hardware-related issues comes in useful. For example, our team would like to make use of a form of mixed reality by creating a physical door that can be operated from within the virtual world, all while making use of the Manus VR gloves. The teacher has already informed us a lot on this very subject. Below I'll describe the first two classes of each masterclass, as they were mandatory to follow, and provide the necessary proof to show my competence in these classes.

Virtual Fashion

The first two classes were really useful in the sense that I did not attend them. During these periods I worked on getting to know the VRTK toolkit and how you can create interactions with objects in VR, which you can read more about here: Virtual Reality and Unity. For the sake of providing a form of proof that I know how to work with the Clo3D tools taught in the masterclasses, I painted this beautiful, relevant dress:

Virtual Programming and Game Assets

The start of this masterclass consisted mainly of learning how to use Unity3D and the Maya modelling tool. We were assigned to create our 'dream house' in Maya and import that into Unity. Granted, I don't have any experience in Maya, but prior to this masterclass I had been using Unity and the Blender modelling tool for a couple of years, so I did not take part in the dream house assignment. Instead I went around the class to see if I could help other students with Unity. Pictured below are two models I created for an earlier school project.

Interaction Design

The introductory classes talked about general user experience design and how it differs in virtual reality. We were paired with other students and had to create a piece of media in which each one of us would introduce themselves, and describe how it should play out in VR. For this we made use of a 3D camera and took a picture outside with all of us standing around the camera. On each of us there is a textbox with our name and study faculty, and the idea was that in VR a user can approach any of us and initiate a conversation to get to know more about that student. Luckily, working out the whole concept was not necessary.

Virtual Technology

This masterclass is the one that interested me most. The first two classes went into the inner workings of the VR headset and a bit of history of VR and AR.
For this class I started two research projects which can be found below. Every following class had an assignment, like giving a presentation about how you could improve modern-day head-mounted displays or pitching ideas for new technologies. One of the presentations, about audio in Unity, can be found here.

On September 26th the team visited the KLM training and simulation department. This is where all the simulators are situated and where the cabin crew is trained on how to respond to different situations (called normal and abnormal situations) that can occur in and around an airplane. The main reason for our visit was to attend a door training. Twice every year the cabin crew has to come to the location to show that they're still able to open the airplane doors according to regulations. For every type of aircraft KLM owns, a small portion of the plane around the door has been recreated. The trainer operates a computer that controls the way the door should handle and the different types of (ab)normal situations that should happen. The trainer can, for instance, set the outside conditions to misty or start a fire inside the airplane, or trigger technical malfunctions like the door handle not responding, the power assist not functioning or the slide not inflating after opening. These are all situations the cabin crew has to train for, because all of them come with different procedures. During these trainings we had the opportunity to watch the cabin crew operate the doors in different stressful situations and learned that opening an airplane door is not just a matter of turning the handle and pushing open the door.

We learned that there are multiple handles that control how the escape slide inflates and how the door is released once it's locked. We also learned that the door has a power assist option, where the operator does not have to put as much force on the door as without it. This is something that ignited a discussion within the team. How are we going to replicate this on our physical door? And how are we going to animate our virtual counterparts? The door consists of a lot of different moving parts. At the end we sat together with the product owners to have a small discussion about the whole day and what they expect to see in the end product. We came to the conclusion that we should either go for a fully immersive experience where the focus lies on the stressful situations and how to respond to these, or focus on creating a physical door that works together with the VR world so that the users build up a form of muscle memory for operating the doors. We walked away with a notebook full of notes and useful knowledge.
